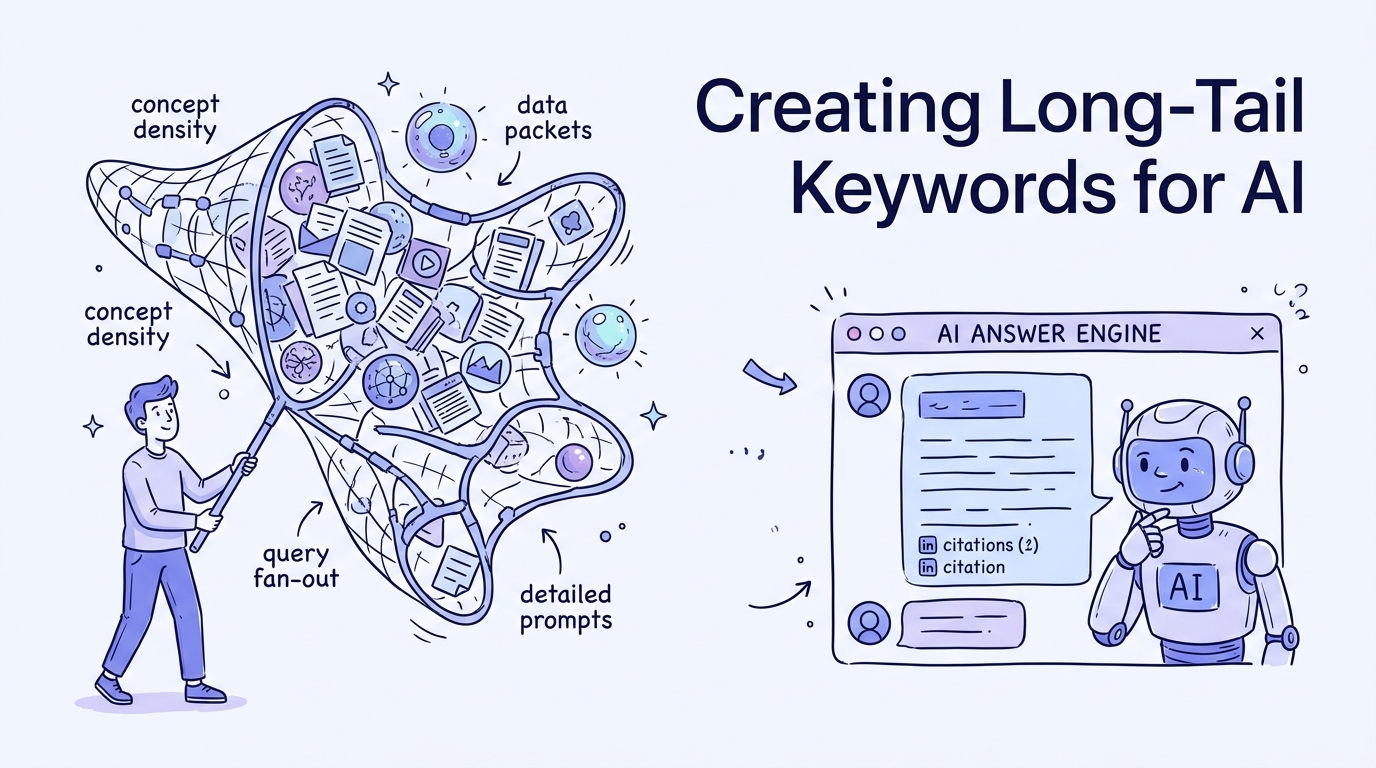

From Keywords to Conversations: Creating Long-Tail Keywords for AI Answer Engines

Short-tail keywords are dying in 2026. This playbook reveals how to capture the 91% of search traffic now driven by complex, multi-sentence AI prompts through semantic optimization.

Traditional search phrases are dying while conversational prompts take the lead. Simple three-word keywords like 'solar panel efficiency' no longer drive clicks because AI engines synthesize the answer before the user ever reaches your site.

The keyword is no longer the atomic unit of search. Modern visibility depends on capturing the median AI prompt, which now stretches to 20 words or more.

If your strategy is still focused on high-volume head terms, you are competing for a vanishing slice of the pie. In the AI era, 80% of queries are entirely unique, reflecting complex human intent that old-school SEO tools often ignore.

Every interaction in this new landscape is a conversation, not a simple lookup. You are either the source the AI cites or you are invisible to the modern user.

The Bottom Line: Winning AI Citations in 2026

Mastering long-tail optimization requires shifting from volume-chasing to concept-answering. Use this checklist to align your content with how LLMs process information.

- Audit current AI citation rates using specialized visibility tools.

- Map core seed topics to multi-intent conversational fan-outs.

- Structure every page with 40-60 word answer summaries for easy extraction.

- Integrate data-rich statistics to boost citation probability by 32%.

- Deploy advanced Schema markup to verify entity relationships for AI crawlers.

Why AI Search Requires a Long-Tail Pivot

The search demand curve has shifted toward extreme specificity. Since mid-2024, AI Overviews have appeared 7x more often for queries containing eight or more words.

By 2026, over 91% of web searches are categorized as long-tail queries. This shift means that semantic coherence and prompt completeness are now more valuable than keyword density.


Sites that prioritize these deep-intent conversational phrases are seeing 300% growth in AI referral traffic. Visibility is no longer about winning a single word, but about owning the answer to a complex scenario.

Complex B2B queries have seen a 151% increase in unique website citations. This suggests that smaller, highly specific pages can outrank giants if they provide better prompt completeness.

Step 1: Audit Your AI Visibility and Baseline

You cannot improve what you do not measure. Start by auditing your current presence in generative engines using tools like Perplexity Pro or Semrush Keyword Overview.

Identify your seed keywords that represent the non-negotiable core of your business. These are the broad topics you want to be known for, even if the AI currently ignores you.

- Run a citation audit on your top ten revenue-driving pages.

- Note which competitors appear in ChatGPT search (formerly SearchGPT) or Perplexity for your core terms.

- Reverse-engineer their concept density to see what specific details they provide that you lack.

- Document the gap between your current ranking position and your citation frequency.

Traditional rankings might show you at position three, but a citation audit might reveal the AI is quoting a competitor at position twenty. Winning AI citations means looking beyond the first page of traditional results.
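The gap described above can be tracked with a few lines of Python. This is a minimal sketch, assuming you have already checked a set of prompts by hand and recorded the results; the `PageAudit` fields, the example URLs, and the thresholds are all hypothetical placeholders, not output from any particular tool.

```python
from dataclasses import dataclass


@dataclass
class PageAudit:
    url: str
    organic_rank: int    # position in traditional results for the target query
    prompts_tested: int  # prompts checked by hand in an AI engine
    prompts_cited: int   # prompts where the page appeared as a cited source


def citation_frequency(audit: PageAudit) -> float:
    """Share of tested prompts in which the page was cited (0.0 to 1.0)."""
    if audit.prompts_tested == 0:
        return 0.0
    return audit.prompts_cited / audit.prompts_tested


def flag_gaps(audits, max_rank=10, min_frequency=0.2):
    """Return URLs that rank well organically but are rarely cited."""
    return [
        a.url
        for a in audits
        if a.organic_rank <= max_rank and citation_frequency(a) < min_frequency
    ]


# Made-up audit data for illustration only.
audits = [
    PageAudit("/pricing", organic_rank=3, prompts_tested=20, prompts_cited=1),
    PageAudit("/guide", organic_rank=18, prompts_tested=20, prompts_cited=9),
]
print(flag_gaps(audits))  # ['/pricing']
```

Pages returned by `flag_gaps` are the ones where a strong ranking is not translating into citations, which makes them reasonable first candidates for the rewrites in the later steps.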

Step 2: Expand Strategy with Query Fan-Out Analysis

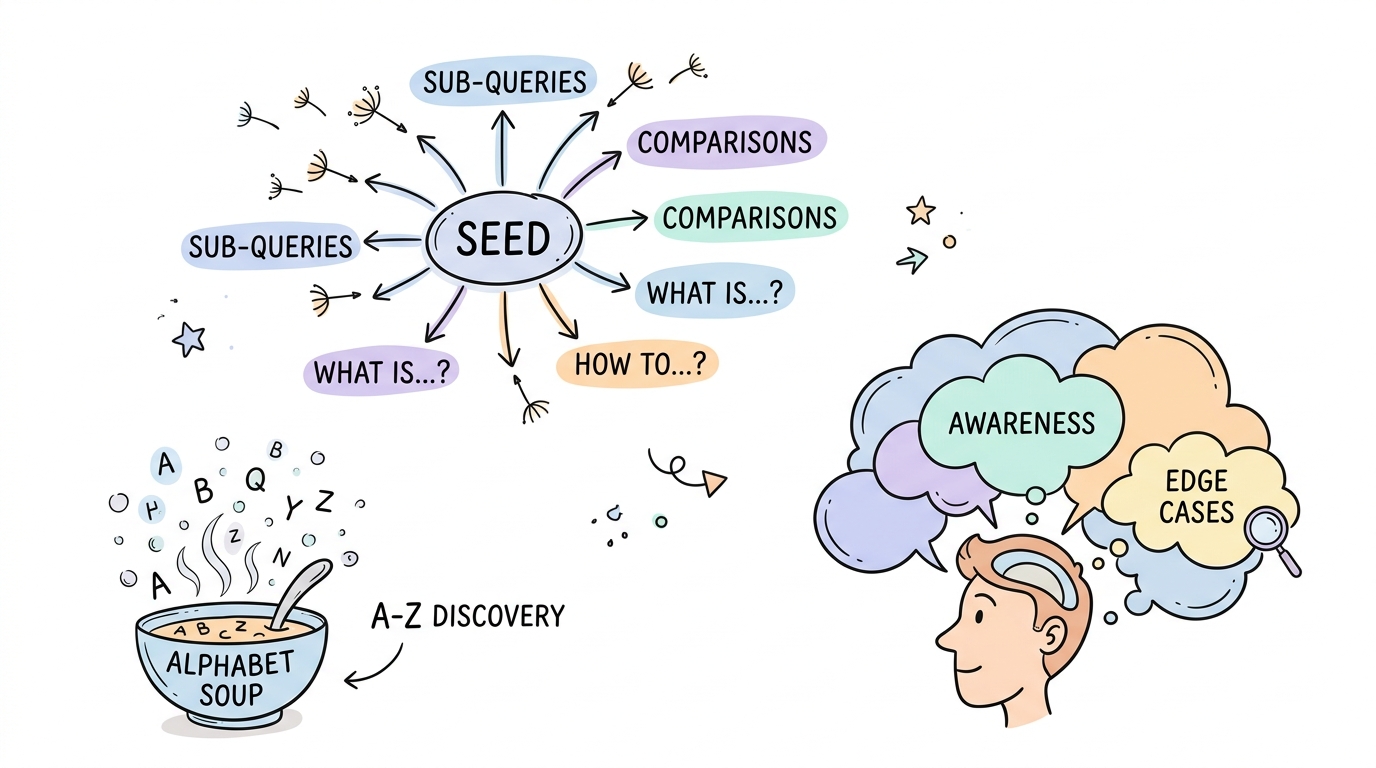

Once you have your seeds, you must expand them into a conversational web. The query fan-out method takes one core question and branches it into related sub-queries, comparisons, and edge cases.

Use the Alphabet Soup method by typing your seed keyword followed by individual letters into AI-enabled search bars. This reveals the actual conversational paths users are taking in real-time.

- Start with a core question like 'How do I choose a mountain bike?'.

- Fan out into intent variations such as 'best mountain bike for heavy riders under $2000'.

- Add edge cases like 'can I use a mountain bike for daily commuting on pavement'.

- Generate customer thinking models using LLMs to predict follow-up questions users will ask next.

This process ensures your content covers the entire prompt lifecycle. Capturing a mindset is more effective than capturing a single click because it positions you as the ultimate authority on the topic.
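To make the fan-out concrete, here is a small Python sketch that generates Alphabet Soup seeds and intent variations for a topic. The function names and the modifier lists are assumptions chosen for illustration; the generated strings are meant to be pasted into a search bar or prompt box by hand, and nothing here queries a search engine.

```python
import string


def alphabet_soup(seed: str) -> list[str]:
    """Build 'seed a', 'seed b', ... to try in a search bar one at a time."""
    return [f"{seed} {letter}" for letter in string.ascii_lowercase]


def fan_out(topic: str, modifiers: dict[str, list[str]]) -> list[str]:
    """Expand a topic into one prompt per (intent prefix, qualifier) pair."""
    prompts = []
    for prefix, qualifiers in modifiers.items():
        for qualifier in qualifiers:
            prompts.append(f"{prefix} {topic} {qualifier}".strip())
    return prompts


# Hypothetical modifiers based on the mountain bike example above.
modifiers = {
    "best": ["for heavy riders under $2000"],
    "can I use a": ["for daily commuting on pavement"],
}

print(alphabet_soup("mountain bike")[:3])
for prompt in fan_out("mountain bike", modifiers):
    print(prompt)
```

Each generated prompt is a candidate question-style header for Step 3, once you have confirmed that real users actually phrase things that way.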

Step 3: Engineer Content for Prompt Completeness

AI engines look for content that is easy to parse and high in information density. You must structure your pages so the LLM crawler can find the exact answer to a user prompt within seconds.

Structure your content with question-based headers that mirror exactly how people speak or type into a prompt box. Follow every H2 or H3 with a concise 40-60 word answer summary.

Tip: Write your answer blocks as if you are responding to a colleague. Use natural language alignment and avoid marketing fluff that complicates the semantic meaning.

Example

If your header is 'What are the best lightweight running shoes for wide feet under $150?', your first sentence should be: 'The best lightweight running shoes for wide feet under $150 are the [Model Name], which features a breathable mesh upper and a wide toe box.'

Consider a procurement officer researching specialized B2B software for a global firm. They don't just search for 'cloud security' but enter a 30-word prompt about SOC2 compliance, legacy hardware integration, and latency in Southeast Asia. If one vendor provides a clear answer block addressing all three points, the AI can cite that vendor as the primary source while ignoring larger competitors.

Adding hard statistics to these sections can increase your citation rate by over 32%. AI engines prioritize data-rich content because it provides verifiable evidence for the generated answer.

- Define the specific user problem in the header.

- Provide the direct answer in the first paragraph.

- Support the answer with a bulleted list of facts or data points.

- Conclude the section with a logical follow-up prompt the user might ask next.
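A quick way to enforce the first two rules is a lint-style check that you run on drafts. The sketch below assumes you can extract each header and its first paragraph as plain strings; the function name and the exact wording of the messages are hypothetical, and the 40-60 word window comes from the guidance above.

```python
def check_answer_block(header: str, first_paragraph: str,
                       min_words: int = 40, max_words: int = 60) -> list[str]:
    """Return a list of problems; an empty list means the block passes."""
    problems = []

    # Question-based headers should read like something typed into a prompt box.
    if not header.strip().endswith("?"):
        problems.append("header is not phrased as a question")

    # The answer summary should fall inside the target word window.
    word_count = len(first_paragraph.split())
    if not min_words <= word_count <= max_words:
        problems.append(
            f"summary has {word_count} words, expected {min_words}-{max_words}"
        )
    return problems


print(check_answer_block("Running shoes", "Too short."))
```

Word count is only a rough proxy for extractability, so treat a passing result as a starting point and still read the summary for clarity.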

Step 4: Implement Advanced Schema for Citation Training

Technical SEO is the bridge between your content and the LLM's understanding. Advanced Schema markup acts as a structured training manual for AI crawlers, helping them verify your data.

Use BrightEdge Data Cube X to identify which Schema types are currently triggering the most citations in your niche. Focus on FAQ, Product, and Review Schema to provide the detailed entity relationships AI engines crave.

```json
{
  "@context": "https://schema.org",
  "@type": "FAQPage",
  "mainEntity": [{
    "@type": "Question",
    "name": "How to optimize solar panel efficiency in cloudy climates?",
    "acceptedAnswer": {
      "@type": "Answer",
      "text": "Use monocrystalline panels and install power optimizers to mitigate shade impact."
    }
  }]
}
```

AI Overviews cite content from lower ranking positions at significantly higher rates when structured data is present. By providing a clear roadmap of your page's entities, you make it easy for the AI to trust your site as a source.
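If your pages already follow the question-and-answer structure from Step 3, the FAQPage markup can be generated rather than written by hand. This is a minimal sketch using only Python's standard `json` module; the `faq_jsonld` helper is a hypothetical name, and the output mirrors the shape of the FAQPage example above.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


print(faq_jsonld([
    (
        "How to optimize solar panel efficiency in cloudy climates?",
        "Use monocrystalline panels and install power optimizers "
        "to mitigate shade impact.",
    ),
]))
```

The resulting string would typically be placed inside a `<script type="application/ld+json">` tag in the page's HTML.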

- Deploy an llms.txt file to guide how AI crawlers interact with your site architecture.

- Ensure your Product Schema includes specific attributes like dimensions, materials, and shipping times.

- Update your Review Schema to highlight expert consensus and verified user feedback.
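For the Product attributes mentioned above, a sketch along these lines shows where dimensions, materials, and shipping times might sit in the markup. The product name, measurements, price, and transit window are invented for illustration, and `product_jsonld` is a hypothetical helper; the property names (`material`, `width`, `height`, `depth`, `shippingDetails`, `deliveryTime`, `transitTime`) follow schema.org vocabulary, but validate the output with a structured data testing tool before relying on it.

```python
import json


def quantity(value: float, unit_code: str) -> dict:
    """Wrap a number as a schema.org QuantitativeValue."""
    return {"@type": "QuantitativeValue", "value": value, "unitCode": unit_code}


def product_jsonld(name, material, width_cm, height_cm, depth_cm,
                   price, currency, transit_days):
    """Build Product JSON-LD with dimensions, material, and transit time."""
    min_days, max_days = transit_days
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "material": material,
        "width": quantity(width_cm, "CMT"),   # CMT = centimetres
        "height": quantity(height_cm, "CMT"),
        "depth": quantity(depth_cm, "CMT"),
        "offers": {
            "@type": "Offer",
            "price": price,
            "priceCurrency": currency,
            "shippingDetails": {
                "@type": "OfferShippingDetails",
                "deliveryTime": {
                    "@type": "ShippingDeliveryTime",
                    "transitTime": {
                        "@type": "QuantitativeValue",
                        "minValue": min_days,
                        "maxValue": max_days,
                        "unitCode": "DAY",
                    },
                },
            },
        },
    }
    return json.dumps(data, indent=2)


# All values below are made up for the example.
print(product_jsonld("Example Trail Bike", "Aluminum", 70, 110, 180,
                     "1899.00", "USD", (3, 5)))
```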

The New Metrics: Measuring Long-Tail Success

Traditional metrics like keyword volume and average position are no longer enough. You must pivot to tracking your Citation Rate and AI-driven referral traffic to understand your true reach.

Use the Google Search Console Performance Report to look for queries with ten or more words. These are the phrases where you are likely winning AI-driven traffic today.
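If you export the report to CSV, the long queries can be pulled out with a short script. The sketch below assumes the export has a query column named `Top queries`; check the header row of your own file and pass a different `query_column` if it differs. The sample rows are invented.

```python
import csv
import io


def long_queries(csv_text: str, min_words: int = 10,
                 query_column: str = "Top queries") -> list[dict]:
    """Return the rows whose query contains at least `min_words` words."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return [row for row in reader if len(row[query_column].split()) >= min_words]


# Invented sample data standing in for a real export.
sample = """Top queries,Clicks,Impressions
mountain bike,120,9000
can i use a mountain bike for daily commuting on pavement,14,210
"""

for row in long_queries(sample):
    print(row["Top queries"], row["Clicks"])
```

For a real file, read its contents with `open(path, newline="", encoding="utf-8").read()` and pass the text to `long_queries`.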

- Citation Rate: The percentage of target prompts where your brand appears in the 'Sources' section of an AI answer.

- AI Referral Traffic: The volume of users clicking through from Gemini, Perplexity, or ChatGPT search (formerly SearchGPT) interfaces.

- Brands Mentioned: A report in tools like Semrush One that tracks how often your name appears relative to competitors in generative responses.

- Prompt Completeness Score: An internal measure of how many sub-questions your content successfully answers for a given topic.
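Two of these metrics reduce to simple ratios, so they are easy to compute from a spreadsheet of manual checks. The sketch below is one possible way to define them, assuming you record per prompt whether your brand was cited, and per topic which sub-questions your page answers; the function names and sample data are hypothetical.

```python
def citation_rate(results: dict[str, bool]) -> float:
    """Percentage of target prompts where the brand appeared in the sources."""
    if not results:
        return 0.0
    return 100 * sum(results.values()) / len(results)


def prompt_completeness(sub_questions: list[str], answered: set[str]) -> float:
    """Fraction of a topic's sub-questions that the content answers (0.0 to 1.0)."""
    if not sub_questions:
        return 0.0
    covered = sum(1 for question in sub_questions if question in answered)
    return covered / len(sub_questions)


# Made-up tracking data: prompt -> whether the brand was cited.
results = {
    "best mountain bike for heavy riders under $2000": True,
    "can I use a mountain bike for daily commuting on pavement": False,
    "how do I choose a mountain bike": False,
    "mountain bike vs gravel bike for beginners": False,
}
print(citation_rate(results))  # 25.0

sub_questions = ["frame size", "suspension type", "budget"]
print(prompt_completeness(sub_questions, {"frame size", "budget"}))
```

Recomputing both numbers on a fixed prompt list at regular intervals gives you a trend line that is comparable over time.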

Monitoring these new indicators will show you exactly where your long-tail strategy is paying off. High citation rates in Perplexity often lead to a halo effect, increasing your authority in traditional search rankings as well.

Traditional SEO vs. AI Search Optimization

The shift from traditional search to AI engines requires a fundamental change in how you produce and measure content. This table breaks down the core differences in strategy.

| Feature | Traditional SEO | AI Search Optimization |
|---|---|---|
| Query Length | 3-4 word keywords | 19-20 word prompts |
| Success Metric | Ranking #1 | Earning a Citation |
| Query Uniqueness | 15% unique queries | 80% unique queries |
| Content Goal | Keyword Density | Concept Density |
| Extraction Method | Link Indexing | Semantic Synthesis |

Choosing the right strategy means moving away from the competitive head terms of the past. Focus on the conversational long-tail to ensure your brand remains a primary source in the generative future.

Capturing the Conversation

Capturing the conversation is about more than just matching words. It is about capturing a mindset and providing the most complete answer to a specific user intent.

You no longer need to rank first for a head term to achieve massive visibility in 2026. By leaning into long-tail complexity, you bypass the gatekeepers and speak directly to the AI engines that now guide user decisions.

The goal is simple: be the best answer to the most detailed questions. When you provide that level of value, the citations and traffic will follow naturally.

Start by auditing your current visibility in Perplexity to see where you stand today.